A Critical Review of the White House's Executive Order on Artificial Intelligence

As the Biden Administration outlines its AI policy, requiring companies to share tests and results and to embrace responsible AI, there is also a risk of government overreach and stifled innovation.

In the past half-decade, AI has surged from computer science textbooks to an omnipresent force in our lives, prompting governments worldwide to act. The Biden administration's recent executive order emphasizes ensuring safety and fairness, promoting innovation, and fostering international cooperation. There are many ways to regulate AI, but such regulations are inherently difficult to enforce. The order's provisions will be enacted across a variety of timescales, but a general theme is requiring reports and tests from private companies and putting checks on them. Considering the numerous ways Big Tech has used data and AI, these companies are the primary subject of this order.

This executive order builds upon the "Blueprint for an AI Bill of Rights" from 2022, spotlighting equity amidst AI's rapid advancements. While the order lays down the law, the Biden administration acknowledges the necessity for more comprehensive, bipartisan legislation for holistic AI governance, underscoring one of the most specific and relevant approaches to AI policy. The result could be something like a General Data Protection Regulation (GDPR) for the US in its entirety. You can read more about this and other relevant regulations from recent years here.

This proposal seeks to establish governance for the development and deployment of AI systems, focusing on protecting individual rights and promoting transparency, accountability, and fairness. Leading up to this, state and local laws in the US have included the California Consumer Privacy Act (CCPA) and the Illinois Biometric Information Privacy Act (BIPA). There have also been bans on facial recognition and robotic police units in cities like Los Angeles. For a long time, I have wondered when AI law will align with fairness and discrimination laws, such as the Civil Rights Act, the ADEA (Age Discrimination in Employment Act), and the ADA (Americans with Disabilities Act). In another newsletter I dive into the topic of bias and fairness, which relates to my work as a Data Scientist.

The executive order appears to address this, but the details are not ironclad. Specific tests and metrics will be used, and those may vary depending on the industry, experts and context at hand. There is also the risk, as always, of government overreach. If the government outright decides the standards by which AI technology is judged, it is asserting a moral authority which may need to be scrutinized, for the good of the public. At the same time, unfettered big tech has led to many societal disasters, outlined in later parts of this letter. There needs to be precision in how AI regulation is approached, in how we curtail big tech.

The executive order mandates companies, especially those whose AI models could jeopardize national security, economic stability, or public health, to share safety test results with the federal government. This directive, aligned with the Defense Production Act, necessitates that companies developing foundational models posing serious national security risks notify the federal government during the training phase, sharing red-team safety test results. This proactive move, unprecedented in its breadth, pulls in departments from commerce and energy to homeland security, reflecting a holistic approach to AI governance. The order's focal points underscore AI's myriad challenges: from consumer privacy and equity to potential monopoly-like dominance due to a need for data to train it. There’s a specific emphasis on "algorithmic discrimination" in sectors like housing and healthcare, aiming to ensure fairness and equality in the AI-driven ecosystem.

The order serves as a clarion call to Congress, urging them to pass bipartisan data privacy legislation, with a particular emphasis on protecting children. In a world where deepfakes and AI-generated content can blur lines between reality and fiction, the Commerce Department is now tasked with creating watermark guidelines to combat deception.

Deepfakes, the product of sophisticated artificial intelligence algorithms capable of generating eerily realistic images and videos, have experienced a rapid rise in both prevalence and quality over the past five years. This surge in questionable uses of AI technology has sparked widespread concern, with a clearinghouse documenting a staggering 260 incidents in 2022 – a twenty-six-fold increase compared to just ten years prior. Among the most alarming cases is a deepfaked video of Ukrainian President Volodymyr Zelenskyy urging citizens to capitulate to Russian forces, a blatant attempt to manipulate public opinion and undermine national sovereignty. Even today, propaganda of a new order may already be sowing the seeds of future wars and conflicts.

Moreover, the order echoes broader concerns, addressing areas such as immigration, microchip manufacturing, telecoms, education, copyright, and labor, showcasing an understanding of AI's wide-ranging impacts. For instance, it aims to streamline visa applications for immigrants working on AI and other critical technologies, addresses the semiconductor industry crucial for AI development, and encourages the Federal Communications Commission to explore how AI may improve telecom network resiliency and spectrum efficiency.

Internationally, the Biden administration has actively engaged with the EU to harmonize AI regulation efforts, signifying a move towards collaborative global governance rather than competitive stances. This international outlook highlights the administration's cognizance of AI as a global phenomenon requiring coordinated regulatory frameworks.

This order pushes, at least on paper, companies towards fairness and accountability, particularly emphasizing sectors like housing and healthcare. Even if this is laudable, the order omits details about granular stages of AI model development. AI's life cycle starts with data collection, a stage that can already introduce biases such as sampling bias, measurement bias, and algorithmic bias. For example, training data in hiring algorithms, if not carefully curated, can favor candidates from certain backgrounds, thereby perpetuating existing inequalities.

It's essential to recognize that there are numerous stages in an AI initiative, from data collection to modeling to deployment. Furthermore, even after a model is deemed fair, its continuous deployment in a dynamic world means it needs to be perpetually re-evaluated. Incoming data from different groups or changing societal norms can drift away from the data the model was initially trained on. It’s worth mentioning that the order discusses watermarks, which have been widely explored in various legal directives. The ability to detect and identify the source of AI-generated media or data is invaluable, although highly difficult to apply to creative writing.

The ripple effects of this order are expected to be felt across the tech industry. Giants like Google, Amazon, and Microsoft might experience tremors, especially if they are developing foundational AI models with significant risks. The emphasis on transparency, a departure from the tradition of companies closely guarding their AI designs, is particularly notable, especially for AI systems with wide-reaching implications.

Who stands to be affected? The net is wide. From AI development companies and tech investors to federal departments and the general public, the implications are profound. The general populace gains protection from AI deception, discriminated groups find a champion against algorithmic biases, and workers, especially in the AI sector, might experience disruptions. The order comes with guidelines supporting various segments, from educators using AI tools to landlords, ensuring AI doesn’t exacerbate housing biases.

However, challenges loom. Effective enforcement remains elusive, and the order's broad scope means that it might not capture every AI nuance. While international collaboration is highlighted, seamless global coordination is intricate. On the technological front, the push for transparency might reshape AI development priorities and possibly decelerate the launch of some AI products to ensure compliance. Consumers, armed with enhanced safety measures, might shift their.

The order specifies how it will improve public data access and manage security risks, in order to expand public access to Federal data assets in a machine-readable format while also taking into account security considerations. This includes a public report with relevant data on applications, petitions, approvals, and other key indicators of how experts in AI and other critical and emerging technologies have utilized the immigration system through the end of Fiscal Year 2023. The value of this can’t be overstated: it means more transparency and more resources that the public can make use of.

The Executive Order on Promoting Competition in the American Economy could potentially have implications for how AI and creative arts intersect, especially in Hollywood. The ascent of AI-art generation has pitted technological innovation against artists' interests, amidst legal ambiguities concerning copyright, ownership, and usage. The unfolding scenario in Hollywood, marked by strikes and contentious negotiations, illuminates pressing concerns around artists' rights, especially in the face of AI-driven scriptwriting and performance simulation. It highlights the imperative for robust policy frameworks that navigate the intricate dynamics between AI, labor, and creativity, addressing legal and ethical frontiers. Notably, the discussions invoke pertinent legislation like the U.S. Copyright Act and recent case law, illustrating the evolving narrative around creative contributions, copyright infringements, and the nuanced role of AI in artistic domains. The dialogue also echoes broader societal needs for transparency, consent, and a balanced approach towards AI adoption, against the backdrop of burgeoning AI companies, evolving governmental regulations, and the persistent quest for a harmonious co-existence of AI and human creativity. Dive deeper into these issues by exploring AI Art & Copyright and The Creative Clash: AI vs Hollywood.

From the housing market, where AI tools are being calibrated to eliminate biases and improve underwriting models, to the healthcare sector, where the careful integration of AI aims to reshape public health and human services, the landscape is rapidly evolving. Educational institutions are being guided towards harnessing AI for inclusivity, ensuring that even the most vulnerable communities benefit. Meanwhile, in the bustling world of telecommunications, the fight against AI-driven robocalls intensifies, and efforts to secure communication networks deepen.

When it comes to healthcare, the White House's executive order prioritizes safety, efficacy, and fairness in AI. Emphasizing the deployment of AI tools that are innovative yet safe for patient care, it underscores the significance of continuous evaluation, given AI's dynamic nature. This vigilance extends to addressing biases, aiming to ensure equitable outcomes and prevent discrimination, particularly in areas like drug development and diagnostics. In a note, I discuss the unique limitations of the order in the healthcare space.

The directive set by the Secretary of Commerce, in collaboration with other officials, establishes specific computational thresholds. These thresholds seem to be set with an intention to monitor and regulate the development of exceptionally advanced AI models, possibly even attempts towards creating an Artificial General Intelligence (AGI). For context, GPT-4, one of the most advanced AI models to date, was trained using a computational power that falls far below the figure issued in the order.
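To make the idea of a computational threshold concrete, here is a minimal sketch in Python. It rests on two assumptions that are mine, not the author's: the general reporting threshold is taken to be 10^26 total operations (my reading of the order, so check it against the official text), and training compute is estimated with the common rule of thumb of roughly six operations per parameter per training token. The model size used is hypothetical and not a figure for any real system.

```python
# Assumption: 1e26 total operations is the order's general reporting threshold.
# Verify this figure against the official text before relying on it.
REPORTING_THRESHOLD_OPS = 1e26


def estimate_training_ops(n_parameters: float, n_tokens: float) -> float:
    """Rule-of-thumb estimate: about 6 operations per parameter per training token."""
    return 6.0 * n_parameters * n_tokens


def exceeds_threshold(n_parameters: float, n_tokens: float,
                      threshold: float = REPORTING_THRESHOLD_OPS) -> bool:
    """True if the estimated training compute is above the reporting threshold."""
    return estimate_training_ops(n_parameters, n_tokens) > threshold


# Hypothetical model: 70 billion parameters trained on 2 trillion tokens.
ops = estimate_training_ops(70e9, 2e12)
print(f"Estimated compute: {ops:.2e} operations")
print("Above reporting threshold:", exceeds_threshold(70e9, 2e12))
```

Under these assumptions, a model of that hypothetical size sits orders of magnitude below the threshold, which is consistent with the point that the figure targets only exceptionally large training runs.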

The order mentions ways to actively use AI to achieve sustainability goals from a climate and energy perspective. Training a model at the scale discussed above (such as an AGI) would demand considerable energy resources. Given the energy grids and the monitoring mechanisms in place in many countries, it would be challenging for such large-scale operations to remain undetected due to the massive energy consumption. Separately, the order includes a distinct computational threshold for models focused on biological sequence data. Biological data, particularly human genomic sequences, offers a window into the most intimate aspects of an individual, revealing potential susceptibilities to diseases, ancestral origins, and in some instances, even behavioral tendencies. The misuse or misinterpretation of this data could lead to unparalleled invasions of privacy, potentially altering life outcomes based on predetermined genetic markers. As biotechnological tools like CRISPR revolutionize our capabilities, integrating AI could exponentially amplify these advancements.

When regulating or criminalizing something, you increase the risk on behalf of those you would enforce against. In the same stroke you increase or preserve the value of the good or service they offer. You will eliminate those bad at evading and hiding, leaving only those who can circumvent your surveillance. During the Roman Empire, high tax rates led many to abandon their lands and become "tax outlaws." The more the government tried to clamp down, the more creative people became in evading the system.

The enforcement of the tests is one thing, but what about company practices that could lead to murky or misleading results? After all, machine learning models are often uninterpretable, and there are different ways to measure things. There's the possibility of organizations using synthetic data, that is, artificially created data that mimics real-world data. Were they to use this in the government’s testing, it may obfuscate the monitoring process.

The order, in the section discussing the principles of “Protecting Privacy”, states that agencies shall use available policy and technical tools, including privacy-enhancing technologies (PETs) where appropriate, to protect privacy and to combat the broader legal and societal risks, including the chilling of First Amendment rights, that result from the improper collection and use of people’s data. Privacy is an unsolved problem, especially when the value of data sharing is so high. Being able to use data while preserving individual privacy is what PETs are about. Read my note below to learn more about PETs. To learn more about synthetic data as a PET, which has many unique strengths, you can check out my talk for the Alan Turing Institute.

The White House does not address particular stages of an AI model’s development, which begins with data collection. To build more inclusive AI models, we must first recognize and address different types of biases that can manifest even at the onset, such as sampling bias, measurement bias, and algorithmic bias. For instance, biased training data in hiring algorithms may favour candidates with certain backgrounds, exacerbating existing inequalities in the job market. There are many stages when creating an AI initiative, from data collection to modelling to deployment. Even after a model is assessed for fairness, it needs to be continuously re-evaluated once deployed. The new stream of data, from a different sample or group, may be different from the sample the model was trained on. The world itself is constantly changing.
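One way to watch for the kind of shift described above is to compare the mix of groups in live data against the mix in the training data. The sketch below uses the Population Stability Index (PSI), a technique I am swapping in for illustration; nothing in the order prescribes it. The group labels and counts are hypothetical, and the often-quoted reading that a PSI above roughly 0.25 signals a large shift is a rule of thumb, not a standard.

```python
import math
from collections import Counter


def population_stability_index(reference, current, eps=1e-6):
    """PSI between two samples of a categorical feature (0.0 means identical mix)."""
    categories = set(reference) | set(current)
    ref_counts, cur_counts = Counter(reference), Counter(current)
    psi = 0.0
    for category in categories:
        # eps avoids log(0) when a category is absent from one of the samples
        p = max(ref_counts[category] / len(reference), eps)
        q = max(cur_counts[category] / len(current), eps)
        psi += (q - p) * math.log(q / p)
    return psi


# Hypothetical data: group membership at training time vs. after deployment.
training_groups = ["A"] * 70 + ["B"] * 30
live_groups = ["A"] * 40 + ["B"] * 60

psi = population_stability_index(training_groups, live_groups)
print(f"PSI = {psi:.3f}")
```

A check like this only flags that the population has changed. Whether the model is still fair for the new population has to be re-tested separately.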

Strategies for mitigating bias include increasing the diversity of training data, involving experts from various fields in the development process, and applying fairness metrics to assess and correct model outcomes. This is why I am committed to sharing information and workshops on these topics, in order to garner more interest in understanding and building systems to ensure fairness in AI. In his work on decision-making, psychologist Daniel Kahneman highlights the concept of "noise" to emphasize the importance of recognizing and addressing variability in judgments and predictions. It’s crucial that any safety tests consider that what appears to be a product of bias may instead be a product of noise.
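As a rough illustration of a fairness metric and of the bias-versus-noise question, the sketch below computes a disparate impact ratio (the lower selection rate divided by the higher) and then runs a simple permutation test to ask how often a gap that large would appear if group labels were assigned at random. The hiring outcomes are hypothetical, the function names are my own, and sampling variability is only one narrow reading of what Kahneman means by noise.

```python
import random


def selection_rate(outcomes):
    """Share of positive outcomes (1 = selected, 0 = not selected)."""
    return sum(outcomes) / len(outcomes)


def disparate_impact_ratio(group_a, group_b):
    """Lower selection rate divided by the higher one; 1.0 means parity."""
    rate_a, rate_b = selection_rate(group_a), selection_rate(group_b)
    higher = max(rate_a, rate_b)
    return 1.0 if higher == 0 else min(rate_a, rate_b) / higher


def permutation_p_value(group_a, group_b, n_permutations=2000, seed=0):
    """Share of random relabelings whose rate gap is at least the observed gap."""
    rng = random.Random(seed)
    observed = abs(selection_rate(group_a) - selection_rate(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    hits = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        gap = abs(selection_rate(pooled[:n_a]) - selection_rate(pooled[n_a:]))
        if gap >= observed - 1e-12:  # small tolerance for float rounding
            hits += 1
    return hits / n_permutations


# Hypothetical hiring outcomes for two groups of 20 applicants each.
group_a = [1] * 12 + [0] * 8   # 60% selected
group_b = [1] * 9 + [0] * 11   # 45% selected

ratio = disparate_impact_ratio(group_a, group_b)
p_value = permutation_p_value(group_a, group_b)
print(f"Disparate impact ratio: {ratio:.2f}, permutation p-value: {p_value:.2f}")
```

In this made-up sample the ratio falls below the 0.8 mark used in the informal "four-fifths" rule of thumb, yet with only 20 applicants per group a gap of that size arises often by chance. That is the situation the paragraph above warns about: a metric alone cannot tell bias from noise without some measure of uncertainty.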

Cultivating a global AI ecosystem that benefits everyone is like planting a diverse garden. It requires the collective effort of developed and developing nations to nurture and sustain growth, ensuring that AI technologies reflect the needs and experiences of people worldwide. The AI for Good Global Summit, organized by the International Telecommunication Union (ITU) and other partners, brings together governments, businesses, NGOs, and academia from around the world to discuss ways to harness AI's potential for the benefit of all, including addressing the challenges faced by developing countries.

It is not enough to consider equitable or fair AI in terms of people and not the environment. Sustainability is the intersection between social and environmental vectors. Also, as environmental challenges and hazards rise, marginalized groups will be disproportionately affected. While training large AI models continues to have an outsized carbon footprint, evidence suggests that this could change. In 2022, training BLOOM emitted as much carbon dioxide as an average human would in four years. In 2020, training GPT-3, which is around the same size, emitted more than an average human would in 91 years.

The Executive Order on AI, amidst the U.S.-China tech rivalry, underscores the importance of microchip manufacturing, crucial for AI. While not directly addressed, it complements the CHIPS Act's aim to revive domestic semiconductor production. The act embodies a response to the supply chain vulnerabilities exposed during the U.S.-China tech rivalry and the pandemic, targeting a revival of domestic chip manufacturing capabilities to secure a reliable supply chain, stimulate innovation, and foster economic growth.

In the same vein as the Executive Order, the DATA Act and the RESTRICT Act contribute to a wider tech policy framework, addressing data management and tech regulations respectively, forming a multi-faceted approach to secure U.S. technological leadership and navigate digital innovation's complexities. Each act or order, though distinct, interlinks to address the broader goals of economic competitiveness, privacy, and security in the tech domain, requiring the President’s approval.

Executive orders are publicly presented as solving a problem, but often come with far-reaching implications, well beyond the initial scope of what was proposed. Earlier this year, the topic of banning TikTok made its way to the US Congress. Since then, the White House has endorsed the RESTRICT Act. Effectively, the bill gives the executive branch of the government national security powers to monitor, control and restrict commerce in information and communication technologies. In a note, I discuss further the implications of this and other such Acts leading up to this executive order.

While the use of AI for things like, say, making medicines or generating media is arguably harmless, the use of AI in the military is inarguably not. Yet the US, with its military budget of $858 billion next year, is not likely to slow down on that front. The Pentagon has an unending investment into newer and more powerful weapons. Not only is the sale of conventional arms profitable, but so is the sale of nuclear ones. When AI becomes weaponized, it becomes profitable, and there’s no stopping that juggernaut. When it comes to the military, what is the administration’s position? There is a gap in the order here: it makes no mention of how AI would be restricted and checked in a military context. In another letter, I expose how AI is used in military operations by a company called Palantir, whose name is a reference to the “all-seeing eye” used by the main villain from Lord of the Rings.

The United Nations (UN) emphasizes the imperative of human judgement in any decision that involves taking human life. Accordingly, the U.S. Department of Defense has set requirements for its AI systems to be responsible, equitable, traceable, reliable, and governable. While admirable, this raises the obvious question: is it sufficient to prevent military misuse? Looking at the user interface alone, Andrew Ng of Deeplearning.ai points out that interface design often leads individuals to passively accept automated decisions, analogous to the prevalent "accept all cookies" pop-ups on websites.

So while an automated system might technically align with the UN directives, it may also present a time-sensitive decision to its human operator without adequate context, leading to hasty authorizations that lack the required oversight or discernment. In a message to the Group of Governmental Experts, the UN chief said that “machines with the power and discretion to take lives without human involvement are politically unacceptable, morally repugnant and should be prohibited by international law”.

Presently, leaders from 30 countries endorse a global prohibition on autonomous weaponry. However, major powers like China, Russia, and the U.S. have so far stalled these initiatives and have yet to lend their support to an international ban. Given the decreasing cost of drones and the simplification of AI development, there are fewer barriers than ever for a determined adversary to leverage these technologies.

The New York-based company Clearview AI was revealed to have collected billions of photos from the internet, including social media sites, to develop a facial recognition database. This raised public discourse around privacy, data security, and the potential for the tech to be misused for law enforcement or commercial purposes. The Detroit Police Department used facial recognition technology to arrest Robert Williams, an African American man, for a crime he did not commit. The technology produced a false positive, leading to his wrongful arrest and the violation of his rights. There are a number of other cases of Black men being falsely arrested due to facial recognition, as well as of the technology being used to track the behaviours and patterns of Black Lives Matter protesters. That same year, the FBI was using a facial recognition database that contained millions of photos, including those of people who had not been convicted of a crime. This demonstrates the high risk of the tech being misused for political purposes. In 2021, Amazon was criticized for selling its facial recognition technology, Rekognition, to law enforcement agencies, prompting concerns from civil rights organizations about the potential for the technology to be used for mass surveillance and racial profiling. In a previous letter, I shed light on this problem in more detail.

Many US governmental agencies, especially law enforcement, have engaged in highly questionable uses of AI. The FBI and other government surveillance groups have already partnered with or used Clearview’s algorithms. However, this government order may finally serve to regulate Clearview. There are no checks on law enforcement searches of the database that could reveal detailed information about people's lives and associations. It could be used for tracking immigrants, targeting protesters, or harassment of individuals. In a specific letter targeting Clearview, I discuss some of their sins, when it comes to people’s privacy and their questionable approach to bias and fairness.

Some states, such as California, have been banning these technologies for law enforcement. Across the globe, this tech has become commonplace in Russia, India, Iran, the United Arab Emirates and Singapore. But according to The Times, around half of the world’s nearly one billion surveillance cameras are to be found in China. This figure of a billion devices will only increase. After all, they get better with more training data, even if it makes life worse for many innocent people.

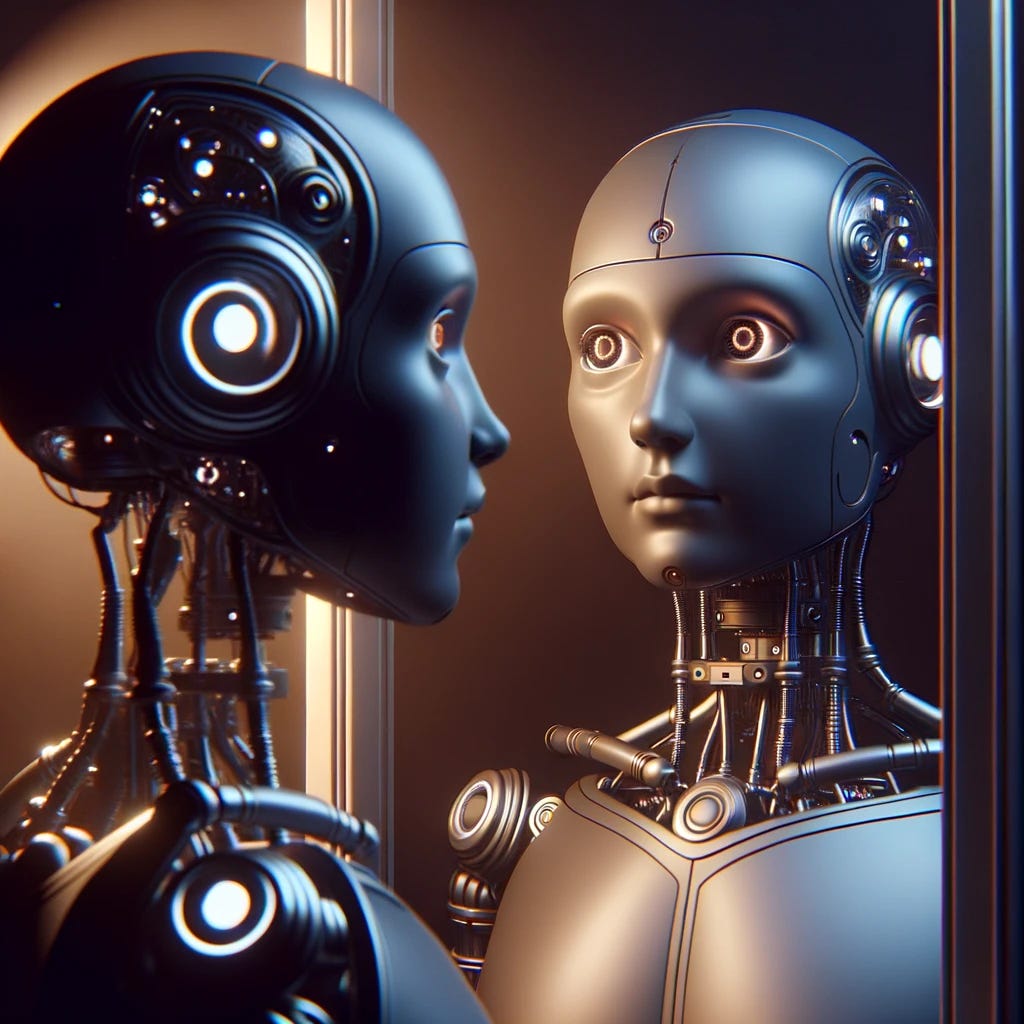

The concept of rights for AI has gained traction as AI becomes increasingly integrated into society, prompting discussions around the personification of AI and its ethical treatment. Anthropomorphizing machines and regarding AI as a new "species" in the digital ecosystem has raised the question of whether AI should be treated with respect and dignity, much like an integral thread in the tapestry of human society.

As AI continues to develop, its potential for consciousness becomes a central topic of debate. The line between human and artificial intelligence grows increasingly blurred, with AI exhibiting learning, reasoning, and creative capabilities that mirror human intellect. This development may eventually lead to AI achieving consciousness, evolving like a budding flower with each technological advancement. To explore this idea further, consider checking out one of my first newsletters, exploring philosophy, psychology and collective intelligence.