Artificial Intelligence: Policy and Legal Review (2023)

Recent statements from the Biden administration and the State of California recognize AI's transformative power. As AI embeds itself in policy-making, governance faces a digital renaissance.

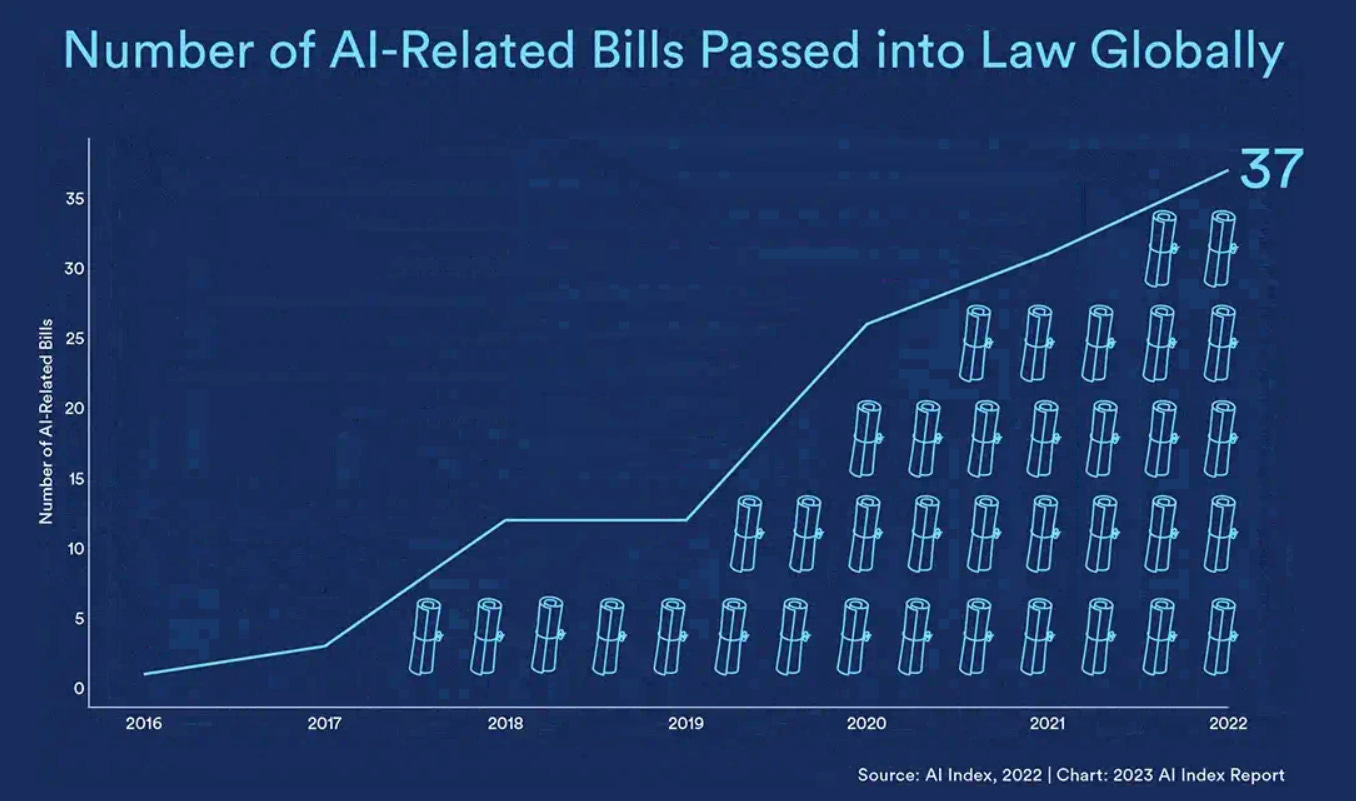

Over the past five years, government scrutiny of Artificial Intelligence (AI) has escalated into global regulatory action. A volley of AI-focused laws and regulations has been unleashed, among them the EU Artificial Intelligence Act (AI Act) and the White House's Blueprint for an AI Bill of Rights.

AI's influence has grown beyond computer science textbooks, and its trajectory now echoes earlier technological revolutions. As chatbots like ChatGPT become more integrated into our daily lives, AI also presents threats: disrupted industries, mass unemployment, and the prospect of systems surpassing human cognition. Under the White House AI accords, industry giants such as Adobe, IBM, and Nvidia have signed voluntary commitments on AI. AI is increasingly recognized as a sociopolitical force, though commitments vary from country to country. It’s concerning that the people influencing the law are the very people the law should be protecting us from: big tech.

The trend from 2016 to 2022 is salient: AI-related bills keep accumulating, so don’t expect this to slow down over the coming years.

The European Union's AI Act, the first major AI regulation, has overcome significant obstacles on its way to becoming law. The AI Act is expected to set a crucial precedent for risk-based regulatory approaches, not just in Europe but worldwide. This comprehensive legal framework aims to ensure that AI technologies are used responsibly, transparently, and in a manner that respects human rights. The United States currently has no comparable governance framework.

Read this letter to learn more about AI laws and fairness concerns in AI.

Fairness, meaning equal treatment and opportunity for all, is a necessary consideration for real-world AI systems. In this context, various laws, such as Title VII of the Civil Rights Act of 1964, the Age Discrimination in Employment Act (ADEA), and the Americans with Disabilities Act (ADA), protect specific classes of individuals from discrimination based on race, colour, religion, sex, national origin, age, or disability.

By crafting and enforcing policies that align AI systems with anti-discrimination laws such as Title VII, the ADEA, and the ADA, we can foster an environment where AI serves as a harmonious and supportive thread within the societal web, contributing to the well-being and fair treatment of all. This is not only a way to make society better and more inclusive; it may also become a legal requirement at various levels of government, especially in countries under EU influence. Individual states and government departments are drafting proposals too, consulting experts and convening panels to develop a possible regulatory roadmap.

This important progress must not come at the price of civil rights or democratic values, foundational American principles that President Biden has affirmed as a cornerstone of his Administration. The President ordered the full Federal government to work to root out inequity, embed fairness in decision-making processes, and affirmatively advance civil rights, equal opportunity, and racial justice in America. These commitments are reflected in the proposed blueprint and bill, but that doesn’t mean they are producing effective change just yet.

The danger of AI regulation lies in the potential agendas of government personnel and lobbyists. Acts may emerge that stifle innovation, as well as individuals' freedom to use and develop AI.

Read this to learn more about recent Acts that have been put in place to control and restrict technology, for good or for evil.

The EU's General Data Protection Regulation (GDPR) is landmark legislation on digital privacy. Its principles provide a foundation for discussions of data collection and processing. The UAE aims for global AI supremacy, yet offers little clarity on its stance on AI surveillance or privacy. The picture gets more complicated and varied across other regions.

From China's "Socialist Core Values" for AI to Brazil's proposed comprehensive AI law, nations are racing to regulate AI. Japan promotes "Society 5.0", aiming to integrate AI across all sectors. Italy opts for stringent regulations. Israel focuses on "responsible innovation" with an emphasis on human dignity. As tech giants align their futures with the White House, the appetite for AI, and its acceleration, seem inevitable. But is the co-operation of big tech and the government always in the interest of the public?

Clearview AI has amassed an unparalleled database of facial images without the knowledge or consent of the people pictured. With over 30 billion photos, its use of this data represents an unprecedented erosion of civil liberties. Drawing intense global scrutiny, the company faces mounting legal challenges in the US, Canada, and numerous European nations. The case forces us to confront the boundaries of technological overreach. Given Clearview's ties to law enforcement, marginalized groups face real risks: they may be targeted or, at the very least, suffer disproportionately from tech-driven policing. This would go against the principles outlined in the AI “Bill of Rights”.

Palantir Technologies develops cutting-edge AI-driven software with notable impact across sectors, from intelligence to cybersecurity. Its active role in warfare, and the views of its leadership, raise deep concerns about human rights, responsibility, and accountability.

Read this to learn more about the use of AI in the military.

The United Nations (UN) emphasizes the imperative of human judgement in any decision that involves taking human life. Similarly, the U.S. Department of Defense requires its AI systems to be responsible, equitable, traceable, reliable, and governable. Its ability to enforce these ideals remains to be seen. The complexity of these systems, and the limitations of training them, result in dangerous edge cases.

Read this to learn more about how AI can make us vulnerable due to overreliance.

Governments worldwide have taken notable regulatory steps to address the growing influence and implications of AI. While AI brings opportunities, it also reflects and amplifies our existing biases and flaws. Even when it solves a problem, it creates several new ones that must be addressed. It’s a multi-headed hydra. It also means there will be a drastic redistribution of wealth. Not enough people have the skills needed to keep a job in increasingly automated economies. The gap between classes, particularly between the lower and middle classes, is likely to widen. In some parts of the world, this could lead to something as drastic as societal collapse.

In a previous newsletter, I explored copyright law and how it might apply to AI-generated works. Since then, a variety of lawsuits and other developments have occurred. Look out for a separate piece reflecting on this subject. It is important for artists and other creatives to have representation in these rulings. For now, you can read my post for an overview of key cases in AI and copyright law.

I’m happy to announce that I am hosting a workshop in Belfast, Northern Ireland. It’s for the One Young World Summit, where I’ll be coaching leaders and activists from all over the world on scrutinizing AI for bias and fairness considerations. If you support what I do, please share this post with your friends and colleagues or on social media. Thank you!