The Need for Equitable Artificial Intelligence

Embrace Equitable Artificial Intelligence for good. For each according to their need, from each according to their ability.

Table of Contents:

Introduction

Famous Controversies in AI and Fairness

Achieving Fairness with Data Centricity

Law and Fairness in AI

Influence of Geography and Class on AI Adoption

The Challenge of Representation

Sustainable AI

Addressing Bias in AI

Mitigation of Bias in AI

Noise versus Bias

Agony of Deepfakes

Rights for AI as a new species

Conclusion

Introduction

Artificial Intelligence has been integrated into our society so quickly that it obscures the future of our civilization.

Based on an index drawn from benchmarks, papers, market research, job listings, and polls from 2022, fifty percent of companies reported that they had adopted AI in at least one business unit or function. Just earlier this year, ChatGPT crossed 100 million users, making it the fastest-growing consumer application on record at the time.

Big data, machine learning and deep learning have enabled technology to surpass human capabilities in many domains. While this has led to unprecedented growth and progress, there is a darker reality as well. AI has inadvertently become a weapon of math destruction. In other words, it is an algorithmic system with an objective function that cannot fully match a real human value or judgement. It is a lower-dimensional view of reality and depends on the underlying data. That data is a snapshot in time and space and, for the most part, covers only a fractional sample of a population.

As automated decision-making systems become more tightly wound into society, issues of bias, inequality, and unforeseen consequences emerge. If we consider the worst-case scenario, we may need to start using Asimov’s Laws of Robotics. This would make an AI comply with principles such as ‘a robot shall not harm a human, or by inaction allow a human to come to harm’. The analogy goes deeper when you consider the prompts used in ChatGPT to direct its behaviour and where it samples from.

In this newsletter, we will explore the complexities of data collection, culture, and design for AI systems, ensuring that they serve as an instrument of good (or at least neutral) rather than perpetuating harm.

Famous Controversies in AI and Fairness

The outcomes experienced by members of certain demographics can often be attributed to the complex nature of societal structures. There may be disparate impact depending on demographic status. In this list, we explore how different AI systems have impacted different groups across gender, ethnicity and geography.

Lack of Inclusivity in AI

SAN FRANCISCO, Oct 9

Computer science has for the first time become the most popular major for female students at Stanford University, a hopeful sign for those trying to build up the thin ranks of women in the technology field.

Based on preliminary declarations by upper-class students, about 214 women are majoring in computer science, accounting for about 30 percent of majors in that department, the California-based university told Reuters on Friday.

Meanwhile, there has been some progress. Women receive 22 percent of new North American bachelor’s degrees in computer science, up from 10 percent a decade ago.

Gender diversity has been a noticeable issue in data science, computer science and artificial intelligence. Although many women work in the AI field at large, as developers, researchers and content creators, the average programmer is usually male.

Law Enforcement

When we unlock our smartphone, it feels effortless. A simple swipe, a fingerprint scan, our voice. Our phones and apps like Facebook apply machine learning to our photographs. This is the most familiar use of the technology, but the stakes are much higher when you consider the critical role AI will soon play in law enforcement surveillance, airport security screenings, and decisions related to employment and housing.

Law enforcement utilizes facial recognition to match suspects' images with mugshots and driver's license photos. It is estimated that nearly half of all American adults—over 117 million people as of 2016—are part of a facial recognition network used by the police. This inclusion happens without consent or even awareness, and is exacerbated by insufficient legislative oversight. More alarmingly, the current application of this technology exhibits significant racial bias, particularly against Black Americans. Even with perfect accuracy, facial recognition could reinforce a law enforcement system already plagued by racial and anti-activist surveillance, further perpetuating existing inequalities. Additionally, U.S. prisons have been using AI to transcribe prisoners’ phone calls, and have used a gang-tracking tool criticized for having a racial bias.

Faulty Facial Recognition

While facial recognition algorithms claim high classification accuracy (above 90%), the results are not consistent across all demographics. Numerous studies reveal varying error rates among different demographic groups, with the lowest accuracy consistently observed among female, Black, and 18-30-year-old subjects.

In the groundbreaking 2018 "Gender Shades" project, researchers employed an intersectional methodology to evaluate three gender classification algorithms, including those developed by IBM and Microsoft. Subjects were divided into four groups: darker-skinned females, darker-skinned males, lighter-skinned females, and lighter-skinned males. All three algorithms exhibited the poorest performance on darker-skinned females, with error rates up to 34 percentage points higher than those for lighter-skinned males. The National Institute of Standards and Technology (NIST) corroborated these findings, determining that facial recognition technologies across nearly 200 algorithms are the least accurate for women of color.

Recidivism

The ProPublica investigation, spearheaded by Angwin and her team, revealed significant racial biases within the COMPAS recidivism prediction algorithm. Their analysis showed that African American defendants were more likely to be inaccurately labeled as high risk compared to white defendants with similar criminal records. The study found that African American defendants were nearly twice as likely to be falsely predicted as future repeat offenders, while white defendants were more likely to be misclassified as low risk despite reoffending.
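The disparity described above is a difference in error rates between groups: how often people who did not reoffend were labeled high risk (false positives), and how often people who did reoffend were labeled low risk (false negatives). The following is a minimal sketch of how such a comparison could be computed. The function name, the record layout, and the toy records are our own assumptions for illustration; this is not ProPublica's code or the COMPAS data.

```python
from collections import defaultdict


def error_rates_by_group(records):
    """Return {group: (false_positive_rate, false_negative_rate)}.

    Each record is a tuple (group, predicted_high_risk, reoffended),
    where the last two fields are booleans. Hypothetical layout.
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for group, predicted, actual in records:
        if predicted and actual:
            counts[group]["tp"] += 1
        elif predicted and not actual:
            counts[group]["fp"] += 1
        elif not predicted and actual:
            counts[group]["fn"] += 1
        else:
            counts[group]["tn"] += 1

    rates = {}
    for group, c in counts.items():
        negatives = c["fp"] + c["tn"]  # people who did not reoffend
        positives = c["fn"] + c["tp"]  # people who did reoffend
        fpr = c["fp"] / negatives if negatives else float("nan")
        fnr = c["fn"] / positives if positives else float("nan")
        rates[group] = (fpr, fnr)
    return rates


# Made-up toy records for two hypothetical groups, NOT real data.
toy_records = (
    [("A", True, False)] * 4 + [("A", False, False)] * 6
    + [("A", True, True)] * 7 + [("A", False, True)] * 3
    + [("B", True, False)] * 2 + [("B", False, False)] * 8
    + [("B", True, True)] * 5 + [("B", False, True)] * 5
)

rates = error_rates_by_group(toy_records)
for group, (fpr, fnr) in sorted(rates.items()):
    print(f"group {group}: false positive rate {fpr:.2f}, false negative rate {fnr:.2f}")
```

In this toy data, group A is wrongly flagged as high risk twice as often as group B, while group B is more often wrongly cleared. A single overall accuracy figure would hide that pattern.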

A multitude of social forces contribute to repeated offenses, including the cyclical nature of prison and factors present in environments where there is a higher need for police presence and precautionary measures. For instance, police may be called more frequently in African American neighborhoods compared to wealthier ones, which can impact the experiences and outcomes of individuals in these communities.

Predictive Policing

Research demonstrated that the predictive policing algorithm, PredPol, led to a significant over-policing of minority neighborhoods. The results indicated that the algorithm disproportionately targeted these communities, amplifying the number of police deployments in those areas. Furthermore, research has shown that police are more likely to be called to predominantly black neighborhoods than to white neighborhoods, which could contribute to the biased outcomes generated by predictive policing algorithms. These confounding factors, such as socio-economic disparities and historical patterns of discrimination, further exacerbate the biases already present in the criminal justice system.

Recruitment tool

In 2018, Amazon scrapped an AI recruitment tool after it exhibited gender bias against female candidates. The AI system had been trained on resumes submitted over a ten-year period, which predominantly came from men. This led the algorithm to favor male applicants, casting a shadow on the fairness of AI in human resources.

Healthcare access

A study published in Science in 2019 revealed that an AI system used in healthcare to predict patients' needs for extra medical care was biased against African American patients. The algorithm assigned lower risk scores to black patients with the same level of health as white patients, resulting in reduced access to vital care programs for a historically marginalized community.

Black patients had to be deemed much sicker than white patients to be recommended for the same care. This happened because the algorithm had been trained on past data on health care spending, which reflects a history in which Black patients had less to spend on their health care compared to white patients, due to longstanding wealth and income disparities. While this algorithm’s bias was eventually detected and corrected, the incident raises the question of how many more clinical and medical tools may be similarly discriminatory.

Education

While it is possible AI can improve the education system, the opposite is quite possible too. The issues surrounding the use of student test scores to evaluate teachers have been widely debated. Critics argue that relying on standardized test results to measure teacher performance can be misleading and ineffective. Factors such as socio-economic background, parental involvement, and student motivation can significantly impact test scores, making it difficult to isolate a teacher's direct influence on student outcomes. Additionally, the pressure to improve test scores may encourage teachers to focus on test preparation rather than fostering critical thinking and problem-solving skills. Read on further to hear more details about the role and impact of AI in education.

Ethical AI Layoffs

A string of AI ethics teams have recently been fired. Amid larger waves of layoffs, Meta, Microsoft, Amazon, Google, and others have downsized their “responsible A.I. teams”. That’s despite large funds being invested into related teams and projects. In fact, academic research has exploded with interest in AI ethics. The 2022 Conference on Fairness, Accountability, and Transparency received 772 papers, more than double the previous year’s submissions.

OpenAI’s Content Moderation

Meanwhile, low wages and rough working conditions are what await Kenyans who agree to work in OpenAI’s content moderation team. It’s strange to contemplate how we still require this kind of work to be done by people in order to fuel so-called ‘artificial intelligence’.

You can read more about the pitfalls of ChatGPT and other large language models below.

The Concerning Rise of AI Surveillance Tech

These past two years have marked an explosion in AI surveillance technology. The watchful eye of Big Brother looms bigger and sees further than ever before. The Glass House Effect can cause a sense of pessimism in persons who are subjected to such unrestrained monitoring. In such circumstances, solitude is conspicuously absent, and privacy is considered a thoughtcrime.

Read this report of the latest and most worrying AI-surveillance technology, covering topics like China’s social credit system, Amazon’s Rekognition, Clearview AI’s controversial use of facial scanning and more.

Achieving Fairness with Data Centricity

In order to achieve fairness in AI systems, there must be a focus on the data. This approach focuses on developing simpler models in conjunction with high-quality data samples. By concentrating on the quality and representativeness of the data, we can ensure that AI models are trained on diverse and unbiased datasets, which significantly reduces the risk of perpetuating unfair biases.

Simpler models, on the other hand, enhance transparency and interpretability, allowing stakeholders to better understand the decision-making processes of AI systems. Furthermore, engaging with the target population throughout the data gathering process creates opportunities for valuable insights and feedback, which can be used to refine AI models and address potential issues proactively. This combination of well-curated data and simpler models promotes AI fairness by fostering inclusivity, mitigating discrimination, and encouraging more equitable access to the benefits of AI technology. Going further, you can inspect the AI’s performance across marginalized demographic groups, and then determine where its weaknesses or biases may be present.
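One way to inspect performance across groups is to slice an evaluation set by a demographic attribute and report accuracy for each slice together with its sample count. The sketch below assumes you already have true labels, model predictions, and a group label per sample; the function names and the toy values are hypothetical.

```python
def accuracy_by_group(y_true, y_pred, groups):
    """Return {group: (accuracy, sample_count)} for each group."""
    totals, correct = {}, {}
    for truth, pred, group in zip(y_true, y_pred, groups):
        totals[group] = totals.get(group, 0) + 1
        correct[group] = correct.get(group, 0) + int(truth == pred)
    return {g: (correct[g] / totals[g], totals[g]) for g in totals}


def weakest_group(report):
    """Return the group with the lowest accuracy in the report."""
    return min(report, key=lambda g: report[g][0])


# Made-up evaluation data for illustration only.
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["urban"] * 5 + ["rural"] * 3

report = accuracy_by_group(y_true, y_pred, groups)
for group, (accuracy, count) in report.items():
    print(f"{group}: accuracy {accuracy:.2f} on {count} samples")
print("weakest group:", weakest_group(report))
```

Reporting the sample count next to each accuracy matters: a low score on a handful of samples may point to missing data rather than to a modelling problem.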

For example, maybe there just isn’t enough data for a certain ethnic group, and so this raises the need to collect more data for them. Or perhaps there has been mislabelling bias. This occurs when the labels assigned to data samples are incorrect or inconsistent, leading to a distortion of the true relationships within the data. This misrepresentation can be a result of human error, ambiguity in the labelling process, or even intentional manipulation. When this bias is present, AI models may inadvertently learn and propagate these errors, ultimately harming the fairness of the system.

Imagine a cartographer meticulously charting a map to represent diverse landscapes accurately. Similarly, data gathering and relabelling are crucial processes in AI development that help refine and expand the 'maps' of human experiences. By reassessing and adjusting the data used in AI models, we ensure that the underlying information is up-to-date and accurately reflects the ever-evolving terrain of our society. This ongoing attention to detail helps create more inclusive AI models, allowing for equitable decision-making and fostering a fairer world.

Read more about data-centricity here.

Law and Fairness in AI

Fairness, the equal treatment and opportunity for all, is a necessary consideration for real world AI systems. In this context, various laws, such as Title VII of the Civil Rights Act of 1964, the Age Discrimination in Employment Act (ADEA), and the Americans with Disabilities Act (ADA), protect specific classes of individuals from discrimination based on race, colour, religion, sex, national origin, age, or disability.

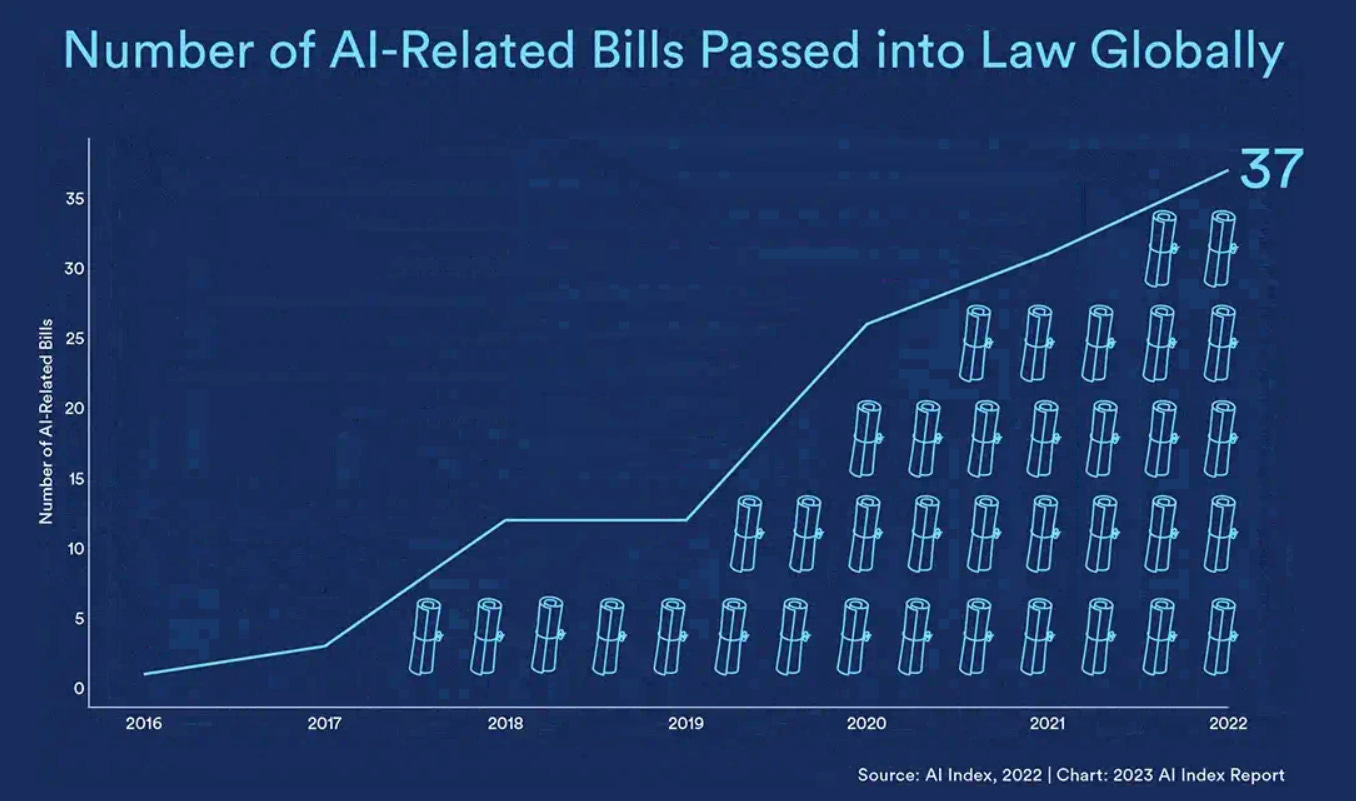

Over the past two years, there has been a significant increase in global government scrutiny of artificial intelligence (AI) technologies, with various AI-focused laws and regulations being proposed and enacted. This growing regulatory momentum highlights the need to address the complex challenges and ethical implications of AI systems and tools. Among them are the EU Artificial Intelligence Act (AI Act) and the proposed US AI Bill of Rights.

Just a few months ago, the European Union's AI Act, the first major AI regulation, successfully overcame significant obstacles on its way to becoming law. The AI Act is expected to set a crucial precedent for future risk-based regulatory approaches not just in Europe, but worldwide. This comprehensive legal framework aims to ensure that AI technologies are used responsibly, transparently, and in a manner that respects human rights. In the United States, there is currently no governance framework comparable to the EU's AI Act. However, the Biden administration has taken a step towards defining a rights-based regulatory approach with the proposal of a "Blueprint for an AI Bill of Rights." This initiative seeks to establish guiding principles for the development and deployment of AI systems, focusing on protecting individual rights and promoting transparency, accountability, and fairness. At the state level, the California Consumer Privacy Act (CCPA) and the Illinois Biometric Information Privacy Act (BIPA) are two notable regulations that have implications for AI technologies, particularly in terms of data privacy and biometric data usage. There have also been local bans on facial recognition and robotic police units in cities like Los Angeles.

By crafting and enforcing policies that align AI systems with fairness and discrimination laws, such as Title VII, ADEA, and ADA, we can foster an environment where AI serves as a harmonious and supportive thread within the societal web, contributing to the well-being and fair treatment of all. This is not only a way to make society better and more inclusive; it may also become a legal requirement imposed by various levels of government, especially in countries under EU influence.

Earlier this year, Italy's data protection regulator deemed the AI chatbot Replika a threat to emotionally vulnerable individuals. The regulator ordered the developer to cease processing Italian users’ data or face a €20 million fine. Back in 2022, British, French, Greek, and Italian authorities issued concurrent fines to Clearview AI, which provides face recognition services to law enforcement agencies, and ordered the company to delete personal data that described their citizens. The same year, U.S. regulators ruled that Kurbo, a weight-loss app, violated a 1998 law that restricts collection of personal data from children under 13. The developer paid a fine, destroyed data, and disabled the app.

The danger of AI regulation lies in the potential agenda of government personnel and lobbyists. Acts may emerge that stifle innovation as well as the individual freedom to use and develop AI. Read this to learn more about recent Acts that have been put in place to control and restrict technology, for good or for evil.

Influence of Geography and Class on AI Adoption

Imagine two neighbouring towns, one with gleaming skyscrapers and bustling tech hubs, while the other has decaying infrastructure and limited access to technology. This stark contrast represents the digital divide created by geography and socioeconomic class, perpetuating inequality in AI adoption and benefits. It is not only between countries that such inequality exists. In the United States, the rural-urban digital divide persists, with rural areas having limited access to high-speed internet, hindering their ability to fully participate in the AI-driven digital landscape.

The ripple effect of the digital divide can be visualized as a stone thrown into a pond, causing concentric circles of impact. The initial splash represents the direct consequences of limited AI access, while the expanding circles signify the implications for AI fairness and inclusivity. Without representation from diverse populations, AI models may propagate bias and reinforce existing inequalities. For example, a facial recognition system trained primarily on images of individuals from higher-income, urban areas may struggle to accurately identify individuals from underrepresented, lower-income communities, leading to unfair consequences in areas like law enforcement and job recruitment.

Addressing the disparities in AI access and understanding is like navigating a winding mountain road, requiring patience, persistence, and a well-planned route. Governments, businesses, and communities must collaborate to close the digital divide, ensuring that AI benefits reach every corner of society. In 2019, Kenya launched the Digital Literacy Program, an initiative aimed at introducing digital technology to primary school students, regardless of their socioeconomic background. This program serves as a blueprint for promoting digital inclusion, preparing future generations for the AI-driven world.

In sub-Saharan Africa, less than 30% of the population has access to the internet, compared to over 90% in North America and Europe. This disparity creates a significant obstacle for AI adoption and development in the region. In India, startups like Niramai are leveraging AI for good, developing low-cost, non-invasive breast cancer screening technology that has the potential to save countless lives in underserved communities.

Read this newsletter to learn more about how developing countries can benefit from data curation and data ecosystems.

The Challenge of Representation

Imagine a picture of the countryside - while it may capture the essence of the scene, it remains a mere representation, unable to encompass every detail and aspect of the real world. Similarly, AI models create lower-dimensional representations of marginalized groups, capturing only a fraction of their true complexity. As AI seeks to simulate human experiences and social dynamics, it risks creating a distorted mirror, reflecting only an oversimplified version of the real thing.

In attempting to represent the intricacies of human society, AI models can inadvertently create a "flat" picture, like a two-dimensional photograph that cannot fully capture the depth of a three-dimensional object. This oversimplification may lead to misrepresentation and perpetuation of stereotypes. Marshall McLuhan, Canadian philosopher and pioneer of media theory, makes the following observation about the representation of marginalized groups in electronic media:

It is this implosive factor that alters the position of the African American, the teenager, and some other groups. They can no longer be contained, in the political sense of limited association. They are now involved in our lives, as we in theirs, thanks to the electric media.

This is the Age of Anxiety for the reason of the electric implosion that compels commitment and participation, quite regardless of any ‘point of view.’ The partial and specialized character of the viewpoint, however noble, will not serve at all in the electric age. At the information level the same upset has occurred with the substitution of the inclusive image for the mere viewpoint.

Sustainable AI

It is not enough to consider equitable or fair AI in terms of people alone while ignoring the environment. Sustainability is the intersection between social and environmental vectors. Moreover, as environmental challenges and hazards rise, marginalized groups will be disproportionately affected.

While training large AI models continues to have an outsized carbon footprint, evidence suggests that this could change. In 2022, training BLOOM emitted as much carbon dioxide as an average human would in four years. In 2020, training GPT-3, which is around the same size, emitted more than an average human would in 91 years.

Addressing Bias in AI

To build more inclusive AI models, we must first recognize and address different types of biases that can manifest in AI, such as sampling bias, measurement bias, and algorithmic bias. For instance, biased training data in hiring algorithms may favour candidates with certain backgrounds, exacerbating existing inequalities in the job market. There are many stages when creating an AI initiative, from data collection to modelling to deployment. Even after a model is assessed for fairness, it needs to be continuously monitored once deployed. The new stream of data, from a different sample or group, may differ from the sample the model was trained on. The world itself is constantly changing.
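A simple way to notice that incoming data no longer resembles the training sample is to compare how a categorical attribute is distributed in each. The sketch below uses total variation distance (half the sum of absolute differences in category shares) as one possible measure. The age bands, the counts, and the alert threshold are all hypothetical values chosen for illustration.

```python
from collections import Counter


def proportions(labels):
    """Return the share of each category in a list of labels."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {category: n / total for category, n in counts.items()}


def total_variation_distance(train_labels, live_labels):
    """Return a value in [0, 1]; 0 means identical category shares."""
    p = proportions(train_labels)
    q = proportions(live_labels)
    categories = set(p) | set(q)
    return 0.5 * sum(abs(p.get(c, 0.0) - q.get(c, 0.0)) for c in categories)


# Made-up age bands: the training sample skews young, the live traffic older.
train = ["18-30"] * 50 + ["31-50"] * 40 + ["51+"] * 10
live = ["18-30"] * 20 + ["31-50"] * 40 + ["51+"] * 40

ALERT_THRESHOLD = 0.1  # hypothetical cut-off; tune for your own system
drift = total_variation_distance(train, live)
needs_review = drift > ALERT_THRESHOLD
print(f"drift = {drift:.2f}, needs review: {needs_review}")
```

A check like this only says that the population has shifted. Whether the model is still fair for the new population has to be re-assessed separately.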

Mitigation of Bias in AI

Strategies for mitigating bias include increasing the diversity of training data, involving experts from various fields in the development process, and applying fairness metrics to assess and correct model outcomes. This is why I am committed to sharing information and workshops on these topics, in order to garner more interest in understanding and building systems to ensure fairness in AI.
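As one illustration of a fairness metric, the sketch below compares selection rates between groups. It reports the gap between the highest and lowest rate (often called the demographic parity difference) and their ratio. In US employment practice, a ratio below 0.8 is commonly treated as a warning sign under the "four-fifths" rule of thumb. The function names and the toy hiring outcomes are assumptions made for this example, and these are only two of many possible metrics.

```python
def selection_rates(decisions, groups):
    """Return {group: share of positive decisions} for each group."""
    selected, totals = {}, {}
    for decision, group in zip(decisions, groups):
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + int(decision)
    return {g: selected[g] / totals[g] for g in totals}


def demographic_parity_difference(rates):
    """Gap between the highest and lowest group selection rate."""
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest one."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else float("nan")


# Made-up hiring outcomes: 6 of 10 men and 3 of 10 women selected.
decisions = [1] * 6 + [0] * 4 + [1] * 3 + [0] * 7
groups = ["men"] * 10 + ["women"] * 10

hiring_rates = selection_rates(decisions, groups)
gap = demographic_parity_difference(hiring_rates)
ratio = disparate_impact_ratio(hiring_rates)
print(f"rates: {hiring_rates}, gap: {gap:.2f}, ratio: {ratio:.2f}")
```

Different fairness metrics can disagree with each other, so the choice of metric should follow from the harm you are trying to prevent.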

In general, training data has the largest impact on the model’s performance. There needs to be a high quantity and wide variety of samples in the dataset. Each subgroup may not be sufficiently represented, especially if there is a majority class present. If there is such a disparity, then additional data can be collected to supplement these subgroups, at the cost of time and money.
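When collecting more data is not immediately possible, a cheaper stopgap is to reweight the samples you already have so that each subgroup contributes equally to training. This is a different technique from the data collection described above, and it does not add any new information about the under-represented group. The sketch below computes inverse-frequency weights; the function name and group labels are hypothetical.

```python
from collections import Counter


def balanced_weights(groups):
    """Return one weight per sample so every group has equal total weight.

    Each sample in group g gets n_samples / (n_groups * count_g).
    """
    counts = Counter(groups)
    n_samples, n_groups = len(groups), len(counts)
    per_group = {g: n_samples / (n_groups * c) for g, c in counts.items()}
    return [per_group[g] for g in groups]


# Made-up dataset with an 80/20 split between two subgroups.
sample_groups = ["majority"] * 8 + ["minority"] * 2
weights = balanced_weights(sample_groups)

majority_total = sum(w for w, g in zip(weights, sample_groups) if g == "majority")
minority_total = sum(w for w, g in zip(weights, sample_groups) if g == "minority")
print(f"majority total weight: {majority_total}, minority total weight: {minority_total}")
```

Many training libraries accept per-sample weights of this kind, but heavily upweighting a very small subgroup can make a model overfit to those few samples.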

The importance of collaboration with diverse stakeholders can never be stressed enough. Involving marginalized communities in AI development is akin to inviting them to paint their own portraits, providing a more authentic and accurate representation of their experiences. One notable example is the collaboration between indigenous communities and researchers in Australia to create AI tools that preserve and promote indigenous languages and cultural knowledge.

Cultivating a global AI ecosystem that benefits everyone is like planting a diverse garden. It requires the collective effort of developed and developing nations to nurture and sustain growth, ensuring that AI technologies reflect the needs and experiences of people worldwide. The AI for Good Global Summit, organized by the International Telecommunication Union (ITU) and other partners, brings together governments, businesses, NGOs, and academia from around the world to discuss ways to harness AI's potential for the benefit of all, including addressing the challenges faced by developing countries.

By promoting a more nuanced understanding of human experiences in AI models, we pave the way for a future where technology serves as a powerful tool for social good, ensuring fairness and inclusivity for all.

Noise versus Bias

In his work on decision-making, psychologist Daniel Kahneman highlights the concept of "noise" to emphasize the importance of recognizing and addressing variability in judgments and predictions.

Consider the judge who is assigned a case. A 1974 study of 50 judges setting sentences for identical (hypothetical) cases found that “absence of consensus was the norm”. The sentences varied wildly, like a judge lottery. A heroin dealer was sentenced to anything between one and 10 years, a bank robber received sentences ranging between five and 18 years, while an extortionist faced anything between three years with no fine at all and 20 years plus a $65,000 fine. According to the authors of Noise, “real-life judges are exposed to far more information than what the study participants received in the carefully specified vignettes of these experiments”.

There is a risk that noise can also make its way into our datasets and automated decision-making. One solution is to standardize decision-making processes by using algorithms or checklists that reduce the influence of individual judgment. Another is to use multiple judges or evaluators to average out individual variations in judgment.
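The effect of averaging can be seen in a small simulation. The sketch below assumes each judge's sentence is a "true" value plus independent random noise, which is a simplification made for illustration and not the design of the 1974 study. Under that assumption, the spread of a panel average should shrink roughly with the square root of the panel size.

```python
import random
import statistics


def simulate_sentences(n_cases, panel_size, true_sentence=10.0, judge_sd=3.0, seed=0):
    """Return (spread of single-judge sentences, spread of panel averages).

    All parameters are hypothetical. Noise is drawn independently per judge.
    """
    rng = random.Random(seed)
    single, panel = [], []
    for _ in range(n_cases):
        opinions = [rng.gauss(true_sentence, judge_sd) for _ in range(panel_size)]
        single.append(opinions[0])                # one judge decides alone
        panel.append(statistics.fmean(opinions))  # the panel's average decides
    return statistics.pstdev(single), statistics.pstdev(panel)


single_sd, panel_sd = simulate_sentences(n_cases=2000, panel_size=9)
print(f"single judge spread: {single_sd:.2f} years")
print(f"nine-judge panel spread: {panel_sd:.2f} years")
```

Averaging only reduces noise. If every judge shares the same bias, the average inherits it in full, which is why noise and bias need to be measured and addressed separately.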

Agony of Deepfakes

Deepfakes, the product of sophisticated artificial intelligence algorithms capable of generating eerily realistic images and videos, have experienced a rapid rise in both prevalence and quality over the past 5 years.

This surge in questionable uses of AI technology has sparked widespread concern, with a clearing house documenting a staggering 260 incidents in 2022 – a twenty-six-fold increase compared to just ten years prior. Among the most alarming cases is a deepfaked video of Ukrainian President Volodymyr Zelenskyy urging citizens to capitulate to Russian forces, a blatant attempt to manipulate public opinion and undermine national sovereignty.

Rights for AI as a new species

The concept of rights for AI has gained traction as artificial intelligence becomes increasingly integrated into society, prompting discussions around the personification of AI and its ethical treatment. Anthropomorphizing machines and regarding AI as a new "species" in the digital ecosystem has raised the question of whether AI should be treated with respect and dignity, much like an integral thread in the tapestry of human society.

As AI continues to develop, its potential for consciousness becomes a central topic of debate. The line between human and artificial intelligence grows increasingly blurred, with AI exhibiting learning, reasoning, and creative capabilities that mirror human intellect. This development may eventually lead to AI achieving consciousness, evolving like a budding flower with each technological advancement.

Real-world examples of AI applications, such as AI-generated art and music, as well as AI's role in decision-making processes like legal systems, shed light on the complexities of authorship, ownership, and autonomy. As AI continues to occupy roles within the workforce, the question of labour rights and protections for AI workers becomes increasingly relevant. Read this newsletter to learn more about IP and Copyright law around AI-generated content.

Conclusion

As we navigate the rapidly evolving landscape of artificial intelligence, it is crucial to imagine a future where AI serves as a tool for promoting fairness and inclusivity.

In our journey toward this equitable AI future, we must remain vigilant in addressing the limitations of data points and simulacra, as well as the various biases that may lurk beneath the surface of our algorithms. We can learn from real cases, such as the biased facial recognition systems that disproportionately affect people of colour, or the AI-driven hiring tools that inadvertently perpetuate gender inequality. These examples serve as powerful reminders of the consequences of unchecked bias and the imperative to strive for AI that is fair and just.

By fostering collaboration among AI developers, businesses, policymakers, and diverse stakeholders, we can work collectively to illuminate the shadows in our digital mosaic and ensure that our AI technologies truly represent and serve the needs of all members of society. In doing so, we will build a brighter and more equitable future in the era of AI.
