When Big Tech Takes A Clear View

Harvesting photos from social media without consent is bad enough, but Clearview AI uses this data to identify you to governments, advertisers and anyone else who can afford their services.

“What’s your name? Where do you live? What do you like to EAT?”

- Colin the Computer

Table of Contents:

Introduction

Taking a Clear View of Legal Challenges

The Risks of AI-driven Surveillance

The Security Breach Revelation

Big Tech: Partners in Crime

The American Civil Liberties Union Lawsuit

A Questionable Character: the CEO of Clearview AI

Bias and Social Inequity

The Erosion of Civil Liberties

Conclusion

Introduction

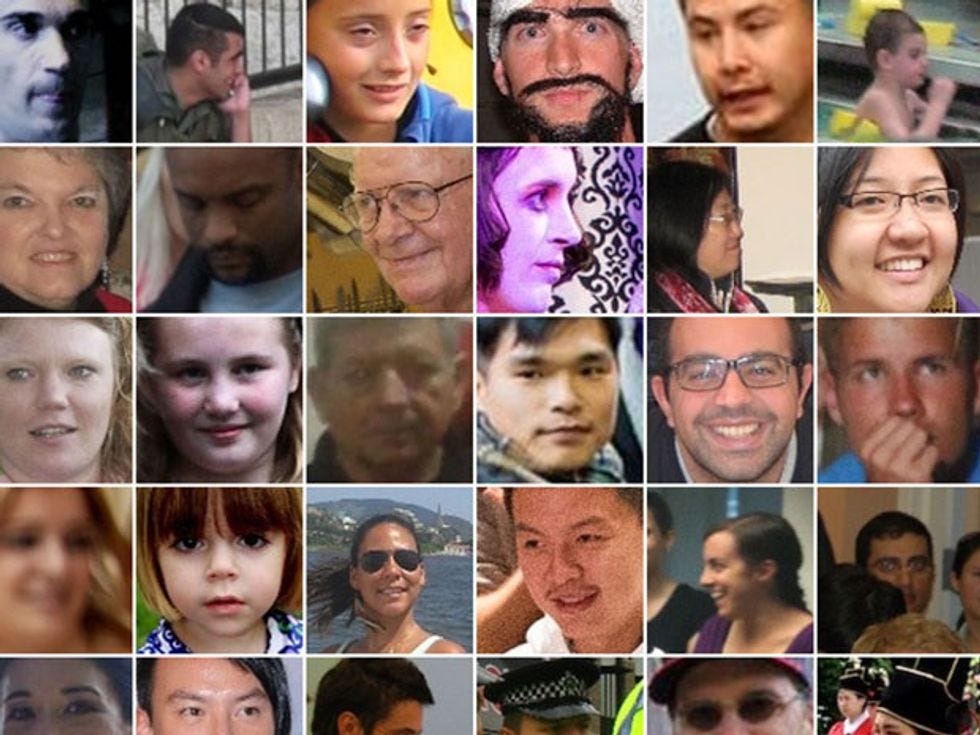

Clearview AI has amassed an unparalleled database of facial images without the knowledge or consent of the people in them. With over 30 billion photos, there’s a good chance that you, dear reader, are in their database. Their use of this data reveals an unprecedented erosion of civil liberties.

Drawing intense global scrutiny, the company grapples with mounting legal challenges from the US, Canada, and numerous European nations. Beneath these legal confrontations lie deep-seated concerns: Are our rights, liberties, and freedoms at stake? As we delve deeper, the magnitude of these issues becomes undeniably pressing, forcing us to confront the boundaries of technological overreach. The connection between Clearview and state police raises serious risks for marginalized groups, who may be targeted or, at the very least, suffer disproportionately from tech-driven policing.

To be clear, these photos are not public data. They weren’t collected based on any agreement we knowingly made with a service provider. The start-up's vast database subsumes images from various social media platforms. Twitter, Google, YouTube, LinkedIn, Venmo and Meta have all sent cease-and-desist letters to Clearview AI demanding that it stop scraping photos from their platforms without user consent. The scraping violates the data policies of these companies, which prohibit collecting user data for surveillance purposes without permission. But tech companies often don’t mind risking fines, because the benefit outweighs the cost. Many of us in the 21st century have become complacent, knowing that our data is being constantly collected and used, but the scale and impact of this is getting out of hand.

Taking a Clear View of Legal Challenges

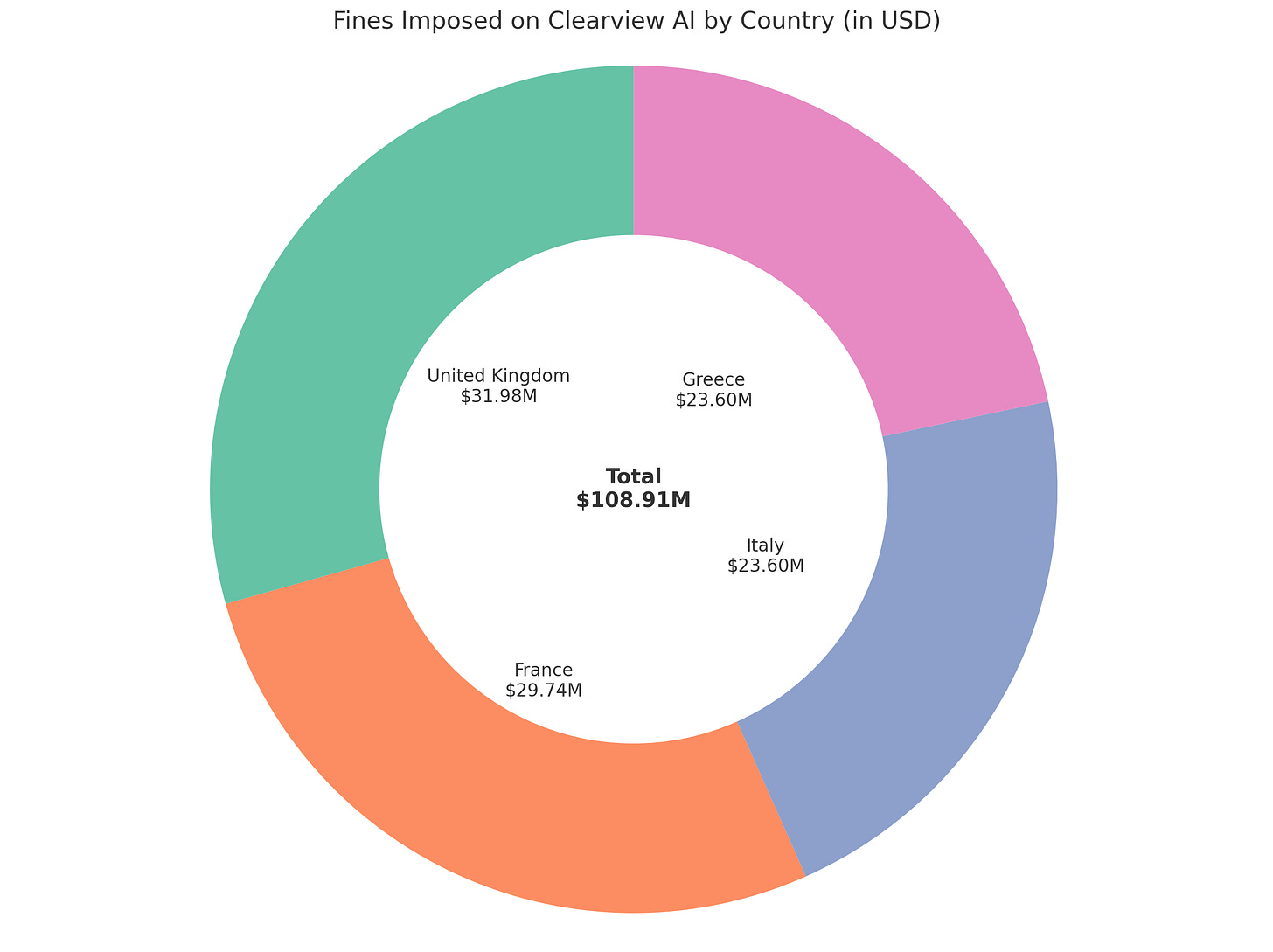

Clearview AI has faced legal challenges across the globe. In Canada, their data scraping was deemed a violation of privacy rights, leading to a cessation of services. Australia found them in breach of the Privacy Act due to unauthorized data collection. The UK and Australia jointly investigated their data scraping practices, while France and Italy initiated their own probes, with Italy banning the processing of its citizens' biometric data. Sweden fined its police for using Clearview AI, citing unlawful use. In Germany, the Hamburg Data Protection Authority preliminarily deemed Clearview's biometric profiles of Europeans illegal, requesting data deletion for specific cases.

When technology gets better at surveilling, tracking and identifying us, the question of how it is used becomes fundamental to our rights to privacy and liberty. Offering its cyclopean eye to law enforcement, Clearview magnifies many of the risks and dangers that come with unchecked use of AI technology. And while some see facial recognition as an inevitable crime-fighting necessity, the company’s practice of scraping billions of images without consent signals an emergent threat to civil liberties.

The Risks of AI-driven Surveillance

Behind our backs, Clearview has harvested images from over a hundred million different people, if not more. These images have been the fodder for the world's largest facial recognition database, capable of instantly identifying anyone from a single photograph or video. Tracking activists, protesters and dissidents has never been easier.

Take a look at past surveillance programs like COINTELPRO. Back in the 1950s, the FBI launched a surveillance operation that grew into the violent suppression of civil rights groups, nationalist organizations, feminists and anti-war activists. Its tactics of false imprisonment, unauthorized surveillance, and smear campaigns grossly violated protections for due process, privacy, and free speech, as well as the protection against cruel and unusual punishment. Attempts to drive targets like Martin Luther King Jr. and actress Jean Seberg to suicide were among the most drastic measures, showing a dramatic overreach by intelligence agencies that required reforms to safeguard citizens' rights.

The nadir may have been when the FBI and the Chicago police staged a raid on the national spokesman for the Black Panther Party, shooting him in his apartment. Imagine what life would have been like if COINTELPRO had had access to powerful facial recognition technology. It may be too late: the FBI and other government surveillance groups have already partnered with or used Clearview’s algorithms. While COINTELPRO targeted groups like the KKK, it also readily targeted human and civil rights activists. This is relevant to Clearview, since the company operates in a largely unregulated space. There are no checks on law enforcement searches of the database, searches that could reveal detailed information about people's lives and associations. The database could be used for tracking immigrants, targeting protesters, or harassing individuals.

"Allowing machines to make life-or-death decisions is an assault on human dignity," warns Amnesty International. Even when the decision is ultimately made by a person, the algorithm has still influenced that person's decision. Clearview reflects Big Tech's past recklessness with data. But the harm this time could be much greater. Its shadowy rise reflects the corporatist agendas of its billionaire libertarian backers, who treat data privacy risks as an operational cost rather than a problem that actually needs to be solved.

The Security Breach Revelation

In 2020, a security breach exposed Clearview's client roster, unveiling the police departments and firms utilizing their services. The servers holding this sensitive biometric information present attractive targets for potential cyber breaches. Should this data end up in the wrong hands, it could facilitate substantial identity theft and fraudulent activities.

In disclosing the breach, Clearview AI said that a security flaw had exposed its client list and the number of user accounts those clients held. The breach revealed details about law enforcement, government agencies, and private companies using Clearview's facial recognition technology across North America, Europe, and Asia. The tech has been deployed across 27 different countries, and the company has said that it’s used by over 2,400 law enforcement agencies.

Big Tech: Partners in Crime

Big Tech in general has struggled to build protections for privacy and methods to mitigate bias. Take Peter Thiel's seat on Facebook's board, from which he allowed Clearview to exploit Facebook code and data to scrape images without consent. Thiel's ideology of a technology-dominated libertarian society is seen as aligned with Clearview's disregard for privacy. A similar controversy at Amazon illustrates how tech companies often overlook issues of bias and discrimination.

Amazon's facial recognition tool Rekognition has faced scrutiny over racial and gender bias. A 2018 ACLU report found that Rekognition falsely matched members of Congress with arrest photos, and that the false matches fell disproportionately on people of color. This sparked controversy about the technology's biases. In a nutshell, machine learning algorithms, and facial recognition in particular, very often don’t work well on dark-skinned people. It goes further than that, though. Some demographics are under-represented in training data, while databases such as mugshot records are racially skewed. Clearview claimed its technology matched faces equally well across races, implying it used the same methodology as the ACLU's Rekognition study. In the “Bias and Social Inequity” chapter of this newsletter, I explore the problems with these claims in some detail. One such issue is how Clearview tested their algorithms.

While the ACLU used tens of thousands of mugshots, Clearview searched 2.8 billion social media photos, which may already have included images of the Congress members themselves. That makes a correct match far easier than in a police search for someone who is not in the database. The ACLU's study raised exactly that concern: if a person's photos aren't present, how often will the system return a false match, especially for people of color? Clearview did not address the questions of racial bias and error rates that arise in real-world policing situations. As of today, there is positive evidence of the technology disproportionately targeting innocent Black people. So far, all the false arrests that leveraged Clearview’s tech have been arrests of Black people.
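The question of what happens when the person being searched for is not in the database can be made concrete with a little arithmetic. The sketch below is only an illustration, not Clearview's or the ACLU's actual methodology. It assumes every comparison between a probe photo and a database photo is an independent trial with a fixed false match rate (FMR), and every number in it is hypothetical. Under those assumptions, the chance that a search over N faces returns at least one false match is 1 - (1 - FMR)^N.

```python
def prob_any_false_match(false_match_rate: float, gallery_size: int) -> float:
    """Chance that searching for someone who is NOT in the gallery still
    returns at least one (necessarily false) match.

    Assumes each probe-vs-gallery comparison is an independent trial with the
    same false match rate, which is a simplification of real systems.
    """
    if not 0.0 <= false_match_rate <= 1.0:
        raise ValueError("false_match_rate must be between 0 and 1")
    if gallery_size < 0:
        raise ValueError("gallery_size must be non-negative")
    return 1.0 - (1.0 - false_match_rate) ** gallery_size


# Hypothetical per-comparison false match rates for two demographic groups.
HYPOTHETICAL_RATES = {"group_a": 1e-7, "group_b": 1e-5}

# Hypothetical gallery sizes: a mugshot set versus a much larger web scrape.
HYPOTHETICAL_GALLERIES = {"mugshots": 25_000, "web_scrape": 1_000_000}

if __name__ == "__main__":
    for gallery_name, size in HYPOTHETICAL_GALLERIES.items():
        for group, rate in HYPOTHETICAL_RATES.items():
            p = prob_any_false_match(rate, size)
            print(f"{gallery_name:>10} | {group} | P(at least one false match) = {p:.4f}")
```

With these made-up inputs, both a larger database and a higher per-comparison false match rate push the probability up. That is why a test run only on people who are already in the database says little about this failure mode.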

The American Civil Liberties Union Lawsuit

In May 2020, the American Civil Liberties Union (ACLU) filed a lawsuit against Clearview AI on behalf of advocacy groups representing domestic violence victims, undocumented immigrants, and sex workers. The lawsuit accuses Clearview of violating the Biometric Information Privacy Act, an Illinois state law. This law prohibits private companies from capturing, obtaining or storing biometric data from state residents, including detailed facial maps, without their informed consent. The case ended in a settlement in May 2022 that restricts Clearview from selling access to its faceprint database to most private companies.

“This is a huge win for the most vulnerable people in Illinois,” said Linda Xóchitl Tortolero, a plaintiff in the case and the head of Mujeres Latinas en Acción, an advocacy group for survivors of sexual assault and domestic violence. “For a lot of Latinas, many who are undocumented and have low levels of IT or social media literacy, not understanding how technology can be used against you is a huge challenge.”

A Questionable Character: the CEO of Clearview AI

Before Clearview AI, CEO Hoan Ton-That was involved in ventures that compromised email accounts and spread disinformation. His hints at Clearview's move towards augmented reality and real-time facial recognition have raised alarms about potential widespread surveillance. The company's leadership is criticized for its unclear handling of data, especially concerning marginalized groups. Despite these concerns, they've resisted third-party checks and often compare their vast data scraping to a simple search engine. He has also claimed that they’re allowed to ingest all this data under “the First Amendment”, the part of the US Constitution that protects free speech, as though the issue were speech rather than, you know, using people’s photos from the Internet without their consent or knowledge.

Ton-That is highly manipulative in interviews, making many misleading claims and flagging up the tool’s accomplishments in response to valid criticism of the company. He displays a narcissism not uncommon in a certain type of tech person who has achieved power and money without necessarily being the creator of the product that gives them this influence.

Furthermore, consumer watchdog groups have admonished Clearview for its excessive claims that its technology can prevent manifold crimes and acts of terrorism, claims that lack substantive evidence. The company's lackluster response to global legal challenges demonstrates the destructive nature of the technocratic philosophy of “move fast and break things.”

Bias and Social Inequity

Are there crimes that were solved with the help of the tool? The answer is yes: there are probably hundreds, and, given enough time, thousands of cases that owe their success, partially, to Clearview. However, consider one major application, where the police use Clearview's algorithm to match someone against the mugshots in their database and build a line-up from the results. As Ton-That himself has mentioned, the Innocence Project notes that around 70% of known wrongful convictions involve eyewitness misidentification. Recently, a pregnant African-American woman was misidentified by an eyewitness examining a line-up of possible suspects. Her mugshot was there because she had driven with an expired license several years earlier. Thus, the model's false matches may inadvertently bolster an eyewitness misidentification. So, even with Clearview’s high scores for overall accuracy, should we expect Clearview’s algorithms to produce more false matches for some demographic groups than for others?

Ton-That made a highly unreliable and dishonest post about this, relying on information from a 2019 report by the National Institute of Standards and Technology (NIST) on Facial Recognition Technology (FRT). He quotes the paper, mentioning that “Most accurate algorithms producing many fewer errors than lower-performing variants. More accurate algorithms produce fewer errors, and will be expected therefore to have smaller demographic differentials.”

His implication is that higher overall accuracy implies reduced risk. At the least, he uses the word “accuracy” loosely, which can be deceiving: several different metrics could be referred to as “accuracy”, and accuracy as a specific metric can be useless, depending on how the testing dataset was designed. In this case, what matters is the rate of “false matches”, where someone’s face is compared to others in the database and matched with someone who isn’t them. False matches are referred to in the report as false positives. The very paper he cites states that “Using the higher quality Application photos, false positive rates are highest in West and East African and East Asian people, and lowest in Eastern European individuals.” It goes on to say, “Our main result is that false positive differentials are much larger than those related to false negatives and exist broadly, across many, but not all, algorithms tested. Across demographics, false positive rates often vary by factors of 10 to beyond 100 times.”
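To see how a single “accuracy” figure can hide exactly the kind of differential NIST describes, consider the small sketch below. The counts are hypothetical and chosen only for illustration (they are not NIST's or Clearview's numbers), and the group names are placeholders. It computes pooled accuracy alongside the false positive rate for each group.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcomes:
    """Comparison outcomes for one demographic group (hypothetical counts)."""
    true_pos: int   # same-person pairs correctly matched
    false_neg: int  # same-person pairs the system missed
    false_pos: int  # different-person pairs wrongly matched (false matches)
    true_neg: int   # different-person pairs correctly rejected

    @property
    def total(self) -> int:
        return self.true_pos + self.false_neg + self.false_pos + self.true_neg


def accuracy(groups: list[Outcomes]) -> float:
    """Share of all comparisons, pooled across groups, that were correct."""
    correct = sum(g.true_pos + g.true_neg for g in groups)
    return correct / sum(g.total for g in groups)


def false_positive_rate(g: Outcomes) -> float:
    """Share of different-person pairs that were wrongly declared a match."""
    return g.false_pos / (g.false_pos + g.true_neg)


# Hypothetical test results; the labels and numbers are illustrative only.
group_a = Outcomes(true_pos=990, false_neg=10, false_pos=10, true_neg=99_990)
group_b = Outcomes(true_pos=990, false_neg=10, false_pos=1_000, true_neg=99_000)

overall = accuracy([group_a, group_b])
fpr_a = false_positive_rate(group_a)
fpr_b = false_positive_rate(group_b)

print(f"overall accuracy: {overall:.4f}")
print(f"false positive rate, group A: {fpr_a:.4%}")
print(f"false positive rate, group B: {fpr_b:.4%}")
print(f"group B / group A: {fpr_b / fpr_a:.0f}x")
```

Here the pooled accuracy is above 99%, yet the false positive rate differs by a factor of 100 between the two hypothetical groups. The headline number alone says nothing about who bears the errors.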

The Florida Institute of Technology showed that certain verification algorithms resulted in elevated false positives and reduced false negatives in African Americans compared to Caucasians. The Times reports the Detroit Police Department's frequent use of facial recognition, predominantly on Black men. It’s worth noting Detroit has a mostly Black population, but this further underscores the severe impact of false matching rates: the worst possible performance becomes the most likely one. There are performance results straight from Clearview’s algorithm in 2019, listed on NIST's vendor assessment. The false match rate is almost 500x larger for West African females than it is for Eastern European males. While there may always be differences between two subgroups, due to the data and even social and geographical features, it is clear that this is a biased outcome. Even if the term bias is reserved for social or cognitive bias, this outcome leads to downstream discrimination and false incarceration. After all, it's a system that helps one demographic group and disproportionately harms another. It's easy to shift accountability to the people using the tool, tuning it and making real-world decisions, but when you make repeated claims about how your tool isn't biased and hasn't led to any false arrests, only for all of the false arrests over the following year(s) to be arrests of Black people, the dishonesty and incoherence are apparent.

There is no shortage of reports, tests and case studies that confirm this, so it might be worth focusing instead on the specific claims Clearview AI makes. Certainly, crimes have been solved using Clearview’s technology. The danger is not just false arrests but, more generally, exacerbating social inequalities and driving recidivism. An unjust criminal record can lead to difficulties in obtaining employment, housing, or other essentials, pushing individuals towards criminal activities out of desperation or lack of alternatives. Furthermore, law enforcement might monitor or target individuals with past records more closely, even if those records are based on false arrests due to algorithmic errors.

The Erosion of Civil Liberties

Some argue the use of facial recognition is inevitable. They say that with regulation, we could mitigate privacy harms while still making use of its capabilities. Orwell's 1984 suggests what is at stake once being watched becomes routine: "It was terribly dangerous to let your thoughts wander when you were in any public place or within range of a telescreen. The smallest thing could give you away."

But privacy advocates remain skeptical. Like Big Brother in Orwell's 1984, Clearview's database is always watching. Meanwhile, China's chilling social credit system demonstrates how facial tracking technologies can expand authoritarian control over citizens, and China is developing similar facial recognition databases by scraping images from WeChat, QQ and other sources to power its mass surveillance systems. Clearview shows that claims of inevitability call for ethical vigilance, not resignation. The novel leaves no doubt about mass surveillance's dangers: "There was of course no way of knowing whether you were being watched at any given moment. How often, or on what system, the Thought Police plugged in on any individual wire was guesswork. It was even conceivable that they watched everybody all the time. But at any rate they could plug in your wire whenever they wanted to. You had to live—did live, from habit that became instinct—in the assumption that every sound you made was overheard, and, except in darkness, every movement scrutinized."

Like the mythological Argus Panoptes, the 100-eyed giant who observed all, Clearview's vast facial database watches us without blinking, challenging any notion of anonymity in public spaces.

Conclusion

Clearview AI, while useful for solving some crimes, is a careless, amoral entity, with its vast database of photos harvested without consent, its feckless treatment of the law and data privacy rights, and its contribution to surveillance capitalism.

The company's practices, combined with its leadership's questionable past and biases in its algorithm, emphasize the need for rigorous oversight in the AI sector. Such concerns aren't limited to Clearview. As AI becomes a more integral part of our lives, it's crucial to understand its broader applications and implications.

For those concerned with the role of careless AI applications in military operations, read on to learn more about Clearview’s more militaristic cousin, Palantir, ominously named after the 'all-seeing' artifact from J.R.R. Tolkien's Lord of the Rings. Also, for those on a quest for fairness in AI, check out my deep dive into Equitable AI below.

The Need for Equitable Artificial Intelligence

The Misuse of AI in Military Operations

"What do such machines really do? They increase the number of things we can do without thinking. Things we do without thinking-there’s the real danger." - Frank Herbert
