The Misuse of AI in Military Operations

As Palantir scales up its AI offerings to governments seeking military advantage, world leaders and scientists alike have raised concerns about the use of AI in warfare.

"What do such machines really do? They increase the number of things we can do without thinking. Things we do without thinking-there’s the real danger."

- Frank Herbert

In an age where technology is treated as synonymous with progress, Artificial Intelligence (AI) grows more dangerous. Palantir Technologies develops cutting-edge software leveraging AI, with notable impact across sectors from intelligence to cybersecurity. Its active role in warfare and the perspectives of its leadership prompt deep concerns about human rights, responsibility and accountability.

Palantir, with its two platforms, Palantir Gotham and Palantir Foundry, has been instrumental in various missions. From counter-terrorism intelligence and fraud investigation in the United States to cyber-analyses at Information Warfare Monitor, Palantir's reach has remained pervasive. Note how the company has named itself after an evil artifact used by the villains of The Lord of the Rings, the ‘Palantir’.

Recently, Palantir entered into a three-year contract worth £75 million ($91.39 million) with the UK's Ministry of Defence. As Europe's most significant conflict since World War II rages in Ukraine, this deal signifies an overseas expansion of Palantir's military role. The software is designed to augment military operations, extending its utility across the defense ministry and building upon a smaller pilot initiated with the Royal Navy.

In addition to aiding real-time decision-making, Palantir's software also offers predictive analytics, charting possible outcomes of various choices. These technologies have drawn scrutiny from British privacy groups, apprehensive about potential government overreach. Since its agreement with the National Health Service (NHS), Palantir has been challenged about the scale, nature and lack of transparency of its business practices.

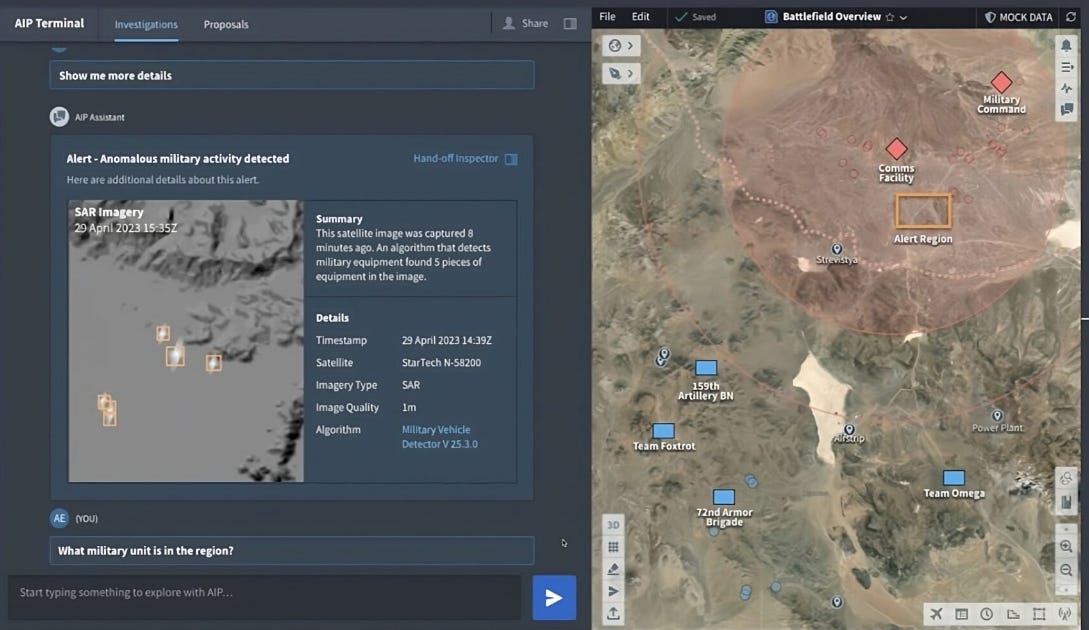

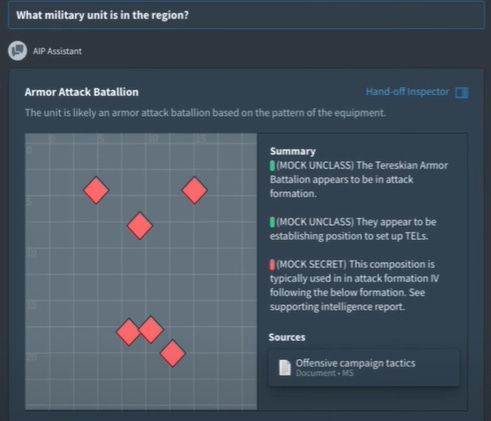

Palantir's systems are designed for optimal resource deployment, aggregating intelligence from diverse sources, from satellites to social media, visualizing military positions, and flagging enemy movements. These capabilities have been underpinned by a Palantir-powered program, Army Vantage, which has significantly influenced the U.S.'s response to Russia's war.

Yet as more obscure, intractable and uninterpretable technology becomes integrated into world affairs, there will be many short-term decisions that could lead to catastrophic outcomes. Consider McLuhan’s concept of the “tetrad.” He proposed that every technology 1) enhances something, 2) makes something obsolete, 3) retrieves something from the past, and 4) when pushed to its limits, reverses into something else. In another case study, I discuss how overreliance on AI technology leaves us highly vulnerable to edge cases.

With Palantir, all of this technology seems to be a mere extension of military operations. The effect of this extension can be devastating: it can scale up the number of mistakes or miscalculations made, or weigh human lives only in short-term terms. Has Palantir ensured that its software meets broader standards of safety, risk, human rights and international conventions? To answer this question, we can look through the window that Palantir's leadership provides.

In “Our Oppenheimer Moment: The Creation of A.I. Weapons”, an article written by Palantir's CEO, Alex Karp, the self-deprecating title does not match the distancing he performs in the piece, where he outsources responsibility to the US government while also signaling an alignment of values. “At Palantir,” he says, “we are fortunate that our interests as a company and those of the country in which we are based are fundamentally aligned”. This kind of pro-nationalist, pro-war worldview is also embodied by other tech companies known for their problematic surveillance and strong connections with governmental agencies. In a similar vein to Clearview AI, some of his co-founders and other partners have endorsed known white supremacists with ties to the KKK.

Overall, it’s clear Alex embodies the principles of an American nationalist. This is a company that compares itself to a Lord of the Rings villain and now to Oppenheimer. This blatant disregard for its public image reflects its values and practices, and should serve as a warning to anyone considering using its services. In another statement, Alex rejects the idea of ethical safeguarding of language models, saying:

“A significant amount of attention has also been directed at the policing of language that chatbots use and to patrolling the limits of acceptable discourse with the machine. The desire to shape these models in our image, and to require them to conform to a particular set of norms governing interpersonal interaction, is understandable but may be a distraction from the more fundamental risks that these new technologies present. The focus on the propriety of the speech produced by language models may reveal more about our own preoccupations and fragilities as a culture than it does the technology itself.”

There are numerous examples of bias in large language models leading to harmful or even illegal outcomes for different populations. For example, AI may display overconfidence, hallucination, omission of facts or evidence, demographic bias and encoded hegemonic worldviews.

Even the idea of unrestricted, unregulated and unmoderated AI models in a military context is downright terrifying. Palantir demands not only the ability to destroy another group or party during a conflict, but also that anything goes within this frame of destruction. Excessive casualties reduce enemy ranks but don’t stop conflict.

How exactly does Palantir measure the success of its models? In his article, Alex describes the company’s function:

“The ability of software to facilitate the elimination of an enemy is a precondition for its value to the defense and intelligence agencies with which we work”. While killing more enemies is the goal of both sides in a war, it is not clear how this reduces the war's length, cost or net mortality rate. Given the largely automated process, with options for response, information about enemy units, and suggestions on weapons to use, has a strategy for actually solving a problem in wartime been raised? For example, non-lethal strategies that have ended wars include diplomacy, economic sanctions, cyber warfare, psychological operations, humanitarian aid, and peacekeeping missions. Yet rather than implement its AI for such missions, Palantir focuses specifically on “elimination of enemies” for its clients.

After all, war is very profitable to the United States, so their choice of metric is not surprising. They go on to tout a closer relationship with the very pro-military government of the USA, indicating an increase in the scale of military operations that use advanced technology to inflict more enemy casualties.

The United Nations (UN) emphasizes the imperative of human judgement in any decision that involves taking human life. Accordingly, the U.S. Department of Defense requires its AI systems to be responsible, equitable, traceable, reliable, and governable. While this is admirable, it raises the obvious question: is it sufficient to prevent military misuse? Looking at the user interface alone, Andrew Ng of DeepLearning.AI points out that interface design often leads individuals to passively accept automated decisions, analogous to the prevalent "accept all cookies" pop-ups on websites. So while an automated system might technically align with the UN directives, it may also present a time-sensitive decision to its human operator without adequate context, leading to hasty authorizations that lack the required oversight or discernment.

In recent history, the Libyan Government of National Accord allegedly used autonomous drones to target retreating insurgents. Equipped with facial and object recognition, these drones would dive towards the enemy combatants, triggering an explosive device upon collision.

This example shows how unchecked technology can be used for extreme ends. The narrative of automated weaponry is far from new, but the advent of AI has revolutionized command and control systems. The possibility of establishing an international ban on autonomous weapons still exists, though the window of opportunity is narrowing swiftly.

In a message to the Group of Governmental Experts, the UN chief said that “machines with the power and discretion to take lives without human involvement are politically unacceptable, morally repugnant and should be prohibited by international law”.

Presently, leaders from 30 countries endorse a global prohibition on autonomous weaponry. However, major powers like China, Russia, and the U.S. have so far stalled these initiatives and have yet to lend their support to an international ban. Given the decreasing cost of drones and the simplification of AI development, there are fewer barriers than ever for a determined adversary to leverage these technologies.

It is clear that cataclysm, whether from the threat of war, geopolitical subjugation or military impacts, is becoming ever more likely. The lack of clear responsibility, the removal of human thought from decision making, and the sheer power to decimate enemies and spread misinformation against them pose a great risk to human life. While Western militaries may employ AI against their enemies, it’s only a matter of time before those enemies use it against them. This could lead to a game of ever-increasing danger and complexity, as each AI system tries to outcompete the others, the end goal potentially being the total evisceration of the enemy population. Read on to learn more about the challenges we face with ethical AI when it comes to mitigating bias and ensuring fairness.

The Need for Equitable Artificial Intelligence

Table of Contents:

- Introduction
- Famous Controversies in AI and Fairness
- Achieving Fairness with Data Centricity
- Law and Fairness in AI
- Influence of Geography and Class on AI Adoption
- The Challenge of Representation
- Sustainable AI
- Addressing Bias in AI